Here's a copy of the article that appeared in Volume 6, Issue 6 of the CGI Magazine, June 2001 Edition.

ATTACHED MPC TIES UP WITH GERRY ANDERSON

THE PUPPETMASTERS

BY CHAS JARRETT

Carlton International’s acquisition of Gerry Anderson’s classic 1960s and 1970s TV shows is leading to a plethora of re-releases. But re-mastering picture and sound, although popular, isn’t the full extent of their future potential. Chas Jarrett recalls the recent work he and others at the Moving Picture Company carried out to bring Captain Scarlet into the 21st century.

ANDERSON SAID THAT FOR EVERYTHING HIS SHOWS WERE, AND EVERYTHING THEY’VE NOW BECOME, HE WAS NEVER SATISFIED WITH THE CONSTRAINTS OF THE TECHNOLOGY OF THAT TIME. THE SHOWS WEREN’T CONCEIVED WITH PUPPET ACTORS OR MODEL SETS IN MIND.

I first met Gerry Anderson in December 1999 at MPC’s offices in Soho, London. He was clutching a script and a folder of drawings, and he laid them out with enormous enthusiasm as he explained the job we were all about to begin. It was a five-minute, all-CGI pilot episode of his 1960s series Captain Scarlet, and we had only nine weeks to make it. With storyboards by Robin Shaw and conceptual designs by Steve Begg, we broke the script down into its various components.

The first thing that struck me was the amount of character work involved. Almost 80 per cent of the shots contained one or more of the three featured characters, and, in keeping with Anderson’s other work, this script was heavy on dialogue to progress the story. This meant lots of lip-sync and facial animation as well as running, jumping, fighting and other interaction. Secondly, there were three main environments, which were very large, very detailed or both. Two were exteriors (one daylight and one night) and the other a daytime interior. There were also cars, aeroplanes, bridges, bombs, shattering trees, lightning, explosions, rocket thrusters, et cetera. It all amounted to a busy nine weeks, and the clock was already ticking.

In January 2000, with a limited crew and pounding New Year’s hangovers, we began modeling. Anderson was adamant that the graphics retain the look and feel of the original series, so although updated, the concept art was distinctly retro. In particular, Anderson wanted the characters’ faces to look like the originals. He commissioned maquette heads of Captain Scarlet and Captain Blue (the good guys) and Captain Black (the bad guy), which we sent to Viewpoint in America to be cyber-scanned. The result was three perfect head models, each an exact likeness of the original puppet. We split the crew into teams: one handled character modeling and animation set-up, another took on the sets, vehicles and props, and a third handled texturing and shading. Generally, this is something we prefer not to do, instead allowing the animators more flexibility in their workload. But large jobs with tight deadlines require delegation, and splitting the job into parts, each with its own lead animator, is a well-proven method.

One week into production, Anderson and his producer John Needham arrived with the dialogue track. After more than 30 years they’d managed to get two of the original voice artists back for the recording. Anderson told me that when he’d been casting for the original series he’d asked Cary Grant to voice the role of Captain Scarlet. It didn’t pan out, but listen carefully and you’ll hear that Francis Matthews is a dead ringer for Grant’s voice.

A MOTIONAL RESPONSE

With the audio track in place, we moved into Shepperton Studios, to the offices of Centroid Motion Capture Services. We’d realized in pre-production that we would never be able to finish on time using traditional keyframe animation alone. Instead, we’d have actors mime to the dialogue track and use motion capture technology to record their body movements. Our concerns about using motion capture, however, were twofold. Firstly, we’d not yet worked with motion capture data in Maya (our main 3D software), which at that time was still at release 2.0. Secondly, recorded data is notoriously hard to edit and tweak once you’ve got it. So we decided to use a mixture of keyframing and motion capture, and got our lead R&D programmer Jonathan Stroud to work on an animation blending system. The result was a set of controls that allowed the animators to blend any percentage of motion capture data with keyframe animation on any joint at any point during a shot.
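The article doesn’t describe how MPC’s blending controls were implemented, so the following is only a minimal sketch of the general idea, under stated assumptions: each joint carries its own animated blend weight (1.0 = pure motion capture, 0.0 = pure keyframe), the weight is interpolated linearly between keys, and rotations are blended channel by channel as Euler angles. All function names are hypothetical. Linear Euler blending is a simplification; a production rig would more likely blend quaternions to avoid angle wrap-around problems.

```python
def blend_channel(mocap, keyframe, weight):
    """Blend one channel value: weight 1.0 = pure mocap, 0.0 = pure keyframe."""
    return weight * mocap + (1.0 - weight) * keyframe


def weight_at(frame, weight_keys):
    """Piecewise-linear blend weight at `frame`.

    weight_keys: list of (frame, weight) pairs sorted by strictly increasing frame.
    Frames before the first key or after the last key hold that key's weight.
    """
    if frame <= weight_keys[0][0]:
        return weight_keys[0][1]
    for (f0, w0), (f1, w1) in zip(weight_keys, weight_keys[1:]):
        if frame <= f1:
            t = (frame - f0) / (f1 - f0)
            return w0 + t * (w1 - w0)
    return weight_keys[-1][1]


def blend_pose(mocap_pose, key_pose, joint_weights, frame):
    """Blend two poses joint by joint for a single frame.

    mocap_pose, key_pose: dict joint name -> (rx, ry, rz) rotation in degrees.
    joint_weights: dict joint name -> weight keys; joints without an entry
    default to full motion capture (hypothetical default).
    """
    result = {}
    for joint, mocap_rot in mocap_pose.items():
        keys = joint_weights.get(joint, [(0, 1.0)])
        w = weight_at(frame, keys)
        result[joint] = tuple(
            blend_channel(m, k, w) for m, k in zip(mocap_rot, key_pose[joint])
        )
    return result


# Usage: fade the elbow from mocap to keyframe over frames 0-10; leave the neck on mocap.
pose = blend_pose(
    {"elbow": (90.0, 0.0, 0.0), "neck": (10.0, 5.0, 0.0)},
    {"elbow": (30.0, 0.0, 0.0), "neck": (0.0, 0.0, 0.0)},
    {"elbow": [(0, 1.0), (10, 0.0)]},
    frame=5,
)
print(pose["elbow"])  # (60.0, 0.0, 0.0): halfway between mocap and keyframe
```

Keeping the weight as a per-joint curve rather than one global slider is what lets an animator, for example, keep captured body motion while hand-keying just a hand or the head for part of a shot.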

Here, I should say that we’ve been using Maya at MPC since its first release, along with RenderMan for most of our rendering. Although Maya has an extensive toolset, we’ve found the need to write many additional plug-ins, including our own subdivision surface modeling tools. These tools allow animators to build and animate very simple models and have them subdivided at render time into smoother, more defined shapes. Also, because they’re based on polygonal geometry, they let us take advantage of a very efficient texturing method, which I think needs to be explained in a little more detail.
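The article doesn’t say which subdivision scheme MPC’s in-house tools used. As an illustration of the general idea (a coarse control mesh refined into a smoother one at render time), here is a minimal sketch of one step of Catmull-Clark subdivision, the most widely used scheme for polygonal subdivision surfaces, assuming a closed mesh with no creases or boundaries. The function and variable names are hypothetical.

```python
from collections import defaultdict


def catmull_clark(verts, faces):
    """One Catmull-Clark step for a closed (watertight) polygon mesh.

    verts: list of (x, y, z) tuples; faces: list of vertex-index lists.
    Returns (new_verts, new_faces); every new face is a quad.
    """
    def avg(points):
        return tuple(sum(p[i] for p in points) / len(points) for i in range(3))

    # Face points: the centroid of each face.
    face_pts = [avg([verts[i] for i in f]) for f in faces]

    # Map each undirected edge to the faces sharing it, and each vertex to its faces.
    edge_faces = defaultdict(list)
    vert_faces = defaultdict(list)
    for fi, f in enumerate(faces):
        for a, b in zip(f, f[1:] + f[:1]):
            edge_faces[tuple(sorted((a, b)))].append(fi)
        for v in f:
            vert_faces[v].append(fi)

    vert_edges = defaultdict(list)
    for a, b in edge_faces:
        vert_edges[a].append((a, b))
        vert_edges[b].append((a, b))

    # Moved original vertices: (F + 2R + (n - 3)P) / n for a vertex of valence n.
    new_verts = []
    for vi, p in enumerate(verts):
        n = len(vert_edges[vi])
        F = avg([face_pts[fi] for fi in vert_faces[vi]])
        R = avg([avg([verts[a], verts[b]]) for a, b in vert_edges[vi]])
        new_verts.append(tuple((F[i] + 2 * R[i] + (n - 3) * p[i]) / n for i in range(3)))

    # Edge points: average of the two endpoints and the two adjacent face points.
    edge_index = {}
    for (a, b), fs in edge_faces.items():
        edge_index[(a, b)] = len(new_verts)
        new_verts.append(avg([verts[a], verts[b]] + [face_pts[fi] for fi in fs]))

    face_index = []
    for pt in face_pts:
        face_index.append(len(new_verts))
        new_verts.append(pt)

    # Each n-sided face becomes n quads: corner, outgoing edge, centre, incoming edge.
    new_faces = []
    for fi, f in enumerate(faces):
        k = len(f)
        for j in range(k):
            prev_v, v, next_v = f[j - 1], f[j], f[(j + 1) % k]
            e_out = edge_index[tuple(sorted((v, next_v)))]
            e_in = edge_index[tuple(sorted((prev_v, v)))]
            new_faces.append([v, e_out, face_index[fi], e_in])
    return new_verts, new_faces


# Usage: a unit cube (8 vertices, 6 outward-facing quads) as the coarse control mesh.
CUBE_VERTS = [(x, y, z) for x in (0, 1) for y in (0, 1) for z in (0, 1)]
CUBE_FACES = [[0, 1, 3, 2], [4, 6, 7, 5], [0, 4, 5, 1],
              [2, 3, 7, 6], [0, 2, 6, 4], [1, 5, 7, 3]]
smooth_verts, smooth_faces = catmull_clark(CUBE_VERTS, CUBE_FACES)
print(len(smooth_verts), len(smooth_faces))  # 26 24
```

Repeating the step converges towards a smooth limit surface, which is why an animator can work with a light, simple cage while the renderer sees a dense, rounded shape.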

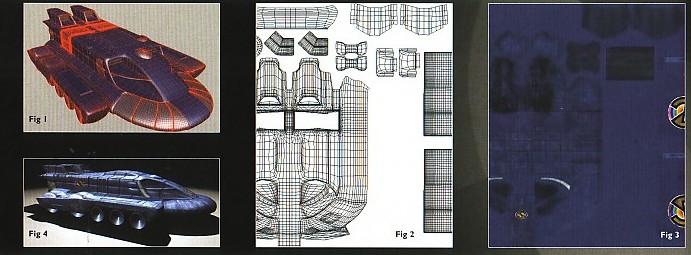

Fig 1 shows the SPV tank model in Maya. We knew from the storyboards that it featured in about 18 shots, often very close to camera, and that it would need very high-resolution textures. Because it was built with subdivision surfaces, the model consists of only about 30 surfaces (a NURBS version would normally consist of many times that). Taking advantage of this and of the Texture View feature in Maya, we decided to texture the entire model (except the engines) with only one colour map file, rather than one map for each surface. Fig 2 shows the ‘unwrapped’ model in the Texture View window, with each element of the model placed in its own location in UV space. In the colour map (Fig 3), you can see that each object has been painted in that same unique location. By applying this one map to every object, each receives only its portion of the texture file (Fig 4).

Of course, the texture has to be very large (this one is 4096x4096 pixels) to ensure each object gets the pixel resolution it needs, but RenderMan is very efficient at handling high-resolution images. The benefits of this approach aren’t earth-shattering, but it does simplify the texturing process as well as the project’s file management. When you’ve already got 400-plus texture files, every saving helps, so we used this technique throughout the project.
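The single-map technique amounts to giving every part its own region of one shared UV square. The sketch below shows that remapping under a deliberately simple, hypothetical layout: parts are packed into an equal-sized square grid, whereas the SPV layout was arranged by hand in Texture View and presumably sized each part by how close it came to camera. The function name and the equal-tile rule are assumptions for illustration only.

```python
import math


def pack_uv_atlas(object_uvs, atlas_size=4096):
    """Remap each object's 0-1 UVs into its own tile of one shared texture.

    object_uvs: dict mapping object name -> list of (u, v) pairs in 0-1 space.
    Returns (remapped, tile_pixels): the remapped UVs per object, and the edge
    length in pixels of the square tile each object receives.
    """
    if not object_uvs:
        raise ValueError("need at least one object to pack")
    grid = math.ceil(math.sqrt(len(object_uvs)))  # tiles per row and column
    tile = 1.0 / grid                              # tile size in UV units
    remapped = {}
    for index, (name, uvs) in enumerate(sorted(object_uvs.items())):
        col, row = index % grid, index // grid
        u0, v0 = col * tile, row * tile            # lower-left corner of this tile
        remapped[name] = [(u0 + u * tile, v0 + v * tile) for u, v in uvs]
    return remapped, atlas_size // grid


# Usage: two parts share one 4096x4096 map, each getting a 2048-pixel tile.
uvs, tile_px = pack_uv_atlas({"hull": [(0.0, 0.0), (1.0, 1.0)], "turret": [(0.5, 0.5)]})
print(tile_px, uvs["turret"])  # 2048 [(0.75, 0.25)]
```

The arithmetic shows why the map has to be so large: if all of the roughly 30 surfaces got equal tiles, a 6x6 grid would leave each only 682 pixels square. A production layout would also leave some padding between regions so that texture filtering doesn’t bleed colour from one part into its neighbour.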

With the motion capture session over, we returned to MPC and set about building an animatic from the recorded data. This was a hardware-rendered version of the entire film, with motion capture skeletons running around in place of the final characters, and it allowed Anderson to direct the camera and editing and see the results instantly. Once he was happy, we began to replace the rough animatic models piece by piece with the final textured versions, added the characters’ heads, which had been lip-synced separately, and hit the big red render button. Of course, things are never that simple in 3D land and every step held its own challenges, but nothing was insurmountable.

Towards the end of the project, as we saw it take shape, I found myself questioning why we were remaking a series that was perfectly good the first time around, and it was Anderson who gave me the surprising answer. He said that for everything his shows were, and everything they’ve now become, he personally was never satisfied with the constraints of the technology of that time. The shows weren’t conceived with puppet actors or model sets in mind; they were simply the only technology available. And as much as we love them – and they encapsulate the charm of a time we reminisce over – he always wanted to rid himself of those constraints and to work without limitations. “Computer graphics is just the next best technology,” he said.

In addition to interior and exterior environments, MPC’s animators created an assortment of unusual vehicles and effects, the trademark of a Gerry Anderson production.

Chas Jarrett is a CG supervisor and senior animator at MPC in Soho, London. For more information on MPC, go to www.moving-picture.com.
